AI for All: The Open-Source Revolution

Buckle up, because the AI revolution is not just coming—it's here, and it's open source. Open-source models are leveling the playing field and reshaping the future of technology and business.

Hi folks! 👋 Welcome to the AI Notebook! 📙

I'm KP, your guide through the ever-evolving world of artificial intelligence. Each week, I curate and break down the most impactful AI news and developments to help tech enthusiasts and business professionals like you stay ahead of the curve. Whether you're a seasoned AI pro or just AI-curious, this newsletter is your weekly dose of insights, analysis, and future-forward thinking. Ready to dive in? Let's explore this week's AI story!

In the fast-paced world of artificial intelligence, the past few weeks have been nothing short of revolutionary. We've witnessed an unprecedented deluge of open-source model releases, each pushing the boundaries of what's possible in AI. From tech giants to emerging startups, the AI community has been abuzz with announcements, code drops, and groundbreaking innovations.

The Open-Source Revolution

We've seen the release of several high-profile models:

Hugging Face's SmolLM

Mistral NeMo

Salesforce's xLAM

Apple's DCLM

Meta's Llama 3.1

And that's just scratching the surface. Each of these models brings something unique to the table, whether it's improved efficiency, novel architectures, or specialized capabilities.

Why This Matters

The significance of this open-source wave cannot be overstated. By making these powerful models freely available, these companies and organizations are:

Democratizing AI technology

Accelerating innovation in the field

Challenging the status quo of proprietary AI systems

This surge in open-source releases is not just about altruism—it's a strategic move that could reshape the AI landscape. It's fostering a new era of collaboration, where developers, researchers, and companies can build upon each other's work, potentially leading to breakthroughs we can't yet imagine.

What's Ahead

In this article, we'll dive deep into each of these models, exploring their unique features, potential applications, and what they mean for the future of AI. We'll also examine the broader implications of this open-source trend and what it might mean for established players in the AI industry.

Buckle up, because the AI revolution is not just coming—it's here, and it's open source.

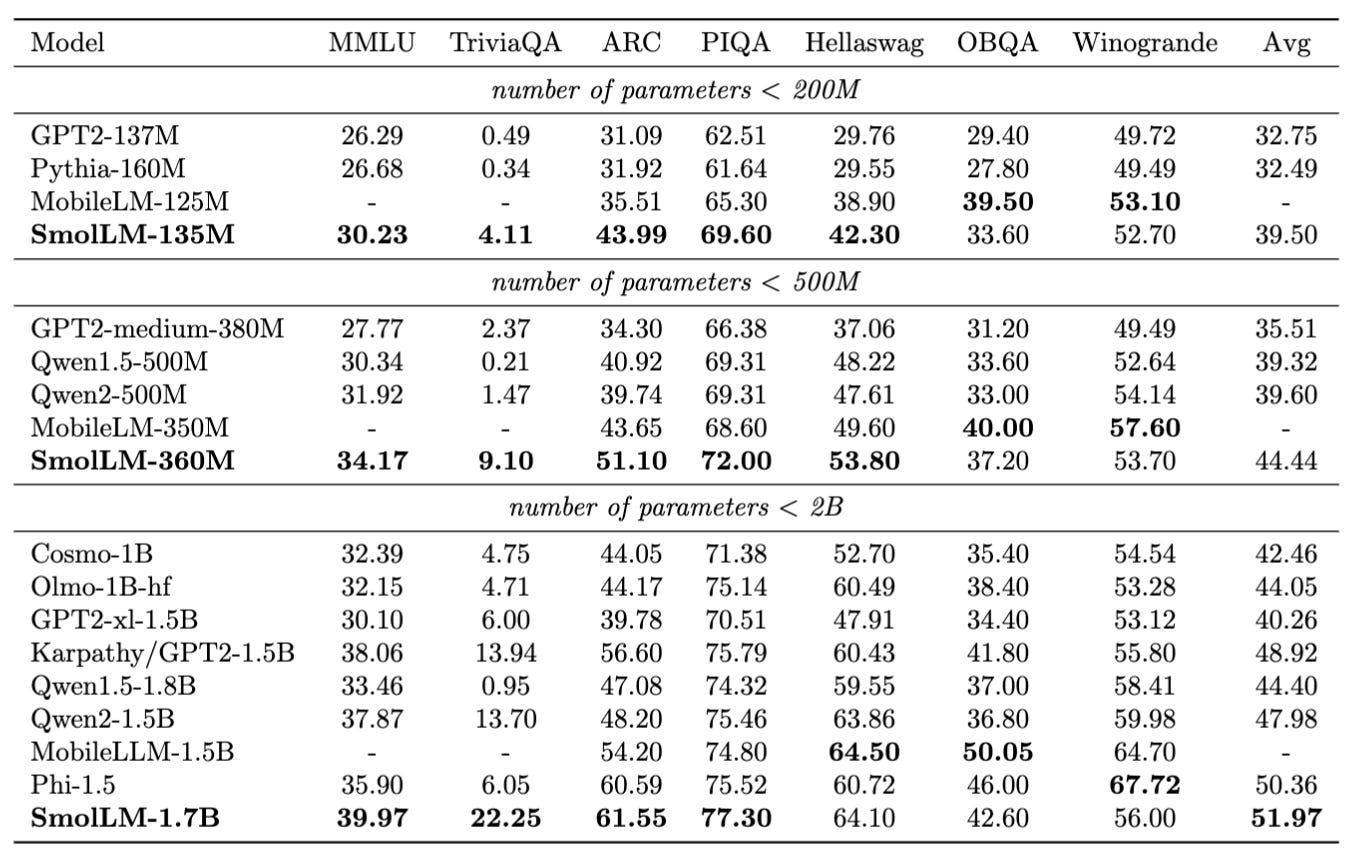

Hugging Face's SmolLM

Hugging Face introduced SmolLM, a family of compact language models that could reshape the edge computing and mobile AI sectors.

SmolLM offers a tiered approach to meet varied compute needs:

SmolLM-135M: 135 million parameters

SmolLM-360M: 360 million parameters

SmolLM-1.7B: 1.7 billion parameters
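To make the "without expensive hardware" point concrete, here is a back-of-the-envelope estimate of how much memory each SmolLM checkpoint's weights occupy. It is a minimal sketch that assumes memory is simply parameter count times bytes per parameter. It ignores activations, the KV cache, and framework overhead, and the int8/int4 rows are illustrative, not formats Hugging Face necessarily ships.

```python
# Rough weight-memory estimate for the three SmolLM sizes.
# Assumption: memory ≈ parameter count × bytes per parameter. This ignores
# activations, the KV cache, and runtime overhead, so real usage is higher.

PARAMS = {
    "SmolLM-135M": 135e6,
    "SmolLM-360M": 360e6,
    "SmolLM-1.7B": 1.7e9,
}

# Bytes per parameter for common storage formats (4-bit packs two weights per byte).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return the approximate size of the weights in gigabytes (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, n in PARAMS.items():
        sizes = ", ".join(
            f"{fmt}: {weight_memory_gb(n, b):.2f} GB" for fmt, b in BYTES_PER_PARAM.items()
        )
        print(f"{name:<12} {sizes}")
```

At 16-bit precision, even the largest variant needs only about 3.4 GB for its weights, which is why models of this size are plausible candidates for laptops and phones.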

The emergence of small models addresses several key market trends:

Accessibility: Bringing AI capabilities to personal devices without the need for expensive hardware.

Privacy Concerns: Enabling AI applications that respect user privacy by processing data locally.

Environmental Considerations: Reducing the carbon footprint associated with large-scale AI deployments.

These models outperform similarly sized models from Microsoft, Meta, and Alibaba (Qwen) on benchmarks covering common-sense reasoning and world knowledge.

The models are trained on SmolLM-Corpus, a high-quality mix of web and synthetic data that includes:

Cosmopedia v2: 28B tokens of synthetic content

Python-Edu: 4B tokens of educational Python samples

FineWeb-Edu: 220B tokens of curated web content
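A quick way to see the shape of this corpus is to compute each component's share of the total. The sketch below uses only the token counts listed above. Note that it describes the corpus itself; the sampling mix actually used during training may differ.

```python
# Token counts (in billions) for the SmolLM-Corpus components listed above.
CORPUS_BTOKENS = {
    "Cosmopedia v2": 28,
    "Python-Edu": 4,
    "FineWeb-Edu": 220,
}


def shares(counts: dict[str, float]) -> dict[str, float]:
    """Return each component's fraction of the total token count."""
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()}


if __name__ == "__main__":
    print(f"Total: {sum(CORPUS_BTOKENS.values())}B tokens")
    for name, frac in shares(CORPUS_BTOKENS).items():
        print(f"{name:<14} {frac:6.1%}")
```

By these figures, FineWeb-Edu makes up roughly 87% of the 252B tokens, Cosmopedia v2 about 11%, and Python-Edu under 2%.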

By open-sourcing the entire development process, Hugging Face reinforces its position as a leader in the open-source AI community.

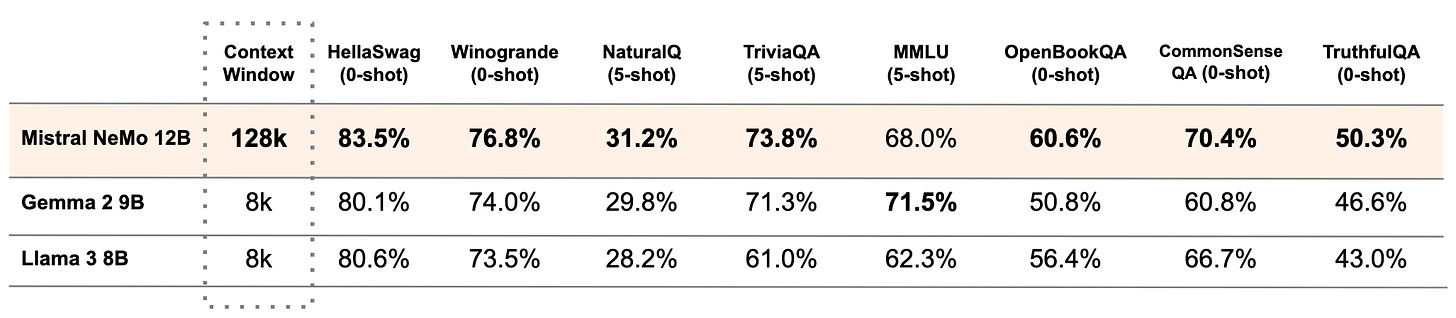

Mistral NeMo

Mistral AI, in collaboration with NVIDIA, has released Mistral NeMo, a 12B-parameter model. With a 128K-token context window, Mistral NeMo can take in very long inputs, such as lengthy documents or extended conversations, in a single prompt, which helps it produce contextually grounded outputs.

Multilingual Prowess: It is built for global, multilingual applications. It demonstrates strength in English, French, German, Spanish, Italian, Portuguese, Chinese, Japanese, Korean, Arabic, and Hindi.

It introduces Tekken, a new tokenizer based on Tiktoken. Notably, Tekken compresses text more efficiently than the Llama 3 tokenizer for approximately 85% of all languages, a significant leap in multilingual processing capability.

Tokenization is the process of breaking text into smaller subword units called tokens, each mapped to an integer ID so that the model can work with numbers rather than raw text. It is fundamental to how LLMs process and generate text, serving as both the first step (turning input text into token IDs) and the last step (turning generated token IDs back into text).
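Tiktoken-style tokenizers, which Tekken builds on, use byte-pair encoding (BPE): start from small units and repeatedly merge the most frequent adjacent pair into a new token. The toy sketch below is character-level and skips the byte handling and regex pre-splitting that real tokenizers perform. The function names are hypothetical, and this is not Tekken's or Tiktoken's implementation.

```python
from collections import Counter


def apply_merge(tokens: list[str], pair: tuple[str, str]) -> list[str]:
    """Replace each non-overlapping occurrence of `pair` (scanning left to right) with one token."""
    out: list[str] = []
    i = 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == pair:
            out.append(tokens[i] + tokens[i + 1])
            i += 2
        else:
            out.append(tokens[i])
            i += 1
    return out


def train_bpe(text: str, num_merges: int) -> list[tuple[str, str]]:
    """Learn up to `num_merges` merge rules, always merging the most frequent adjacent pair."""
    tokens = list(text)  # start from individual characters
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        pairs = Counter(zip(tokens, tokens[1:]))
        if not pairs:
            break
        best, count = pairs.most_common(1)[0]
        if count < 2:  # no pair repeats, so another merge would not shorten the text
            break
        merges.append(best)
        tokens = apply_merge(tokens, best)
    return merges


def encode(text: str, merges: list[tuple[str, str]]) -> list[str]:
    """Split into characters, then replay the learned merges in training order."""
    tokens = list(text)
    for pair in merges:
        tokens = apply_merge(tokens, pair)
    return tokens


def build_vocab(corpus: str, merges: list[tuple[str, str]]) -> dict[str, int]:
    """Assign an integer ID to every base character and every merged token."""
    symbols = set(corpus) | {a + b for a, b in merges}
    return {tok: i for i, tok in enumerate(sorted(symbols))}


if __name__ == "__main__":
    corpus = "low lower lowest low low"
    merges = train_bpe(corpus, num_merges=10)
    vocab = build_vocab(corpus, merges)
    id_to_token = {i: t for t, i in vocab.items()}

    tokens = encode("lowest low", merges)
    ids = [vocab[t] for t in tokens]  # the integers the model actually sees
    print(tokens)  # ['low', 'e', 's', 't', ' low']
    print(ids)     # [6, 3, 9, 10, 1]
    print("".join(id_to_token[i] for i in ids))  # lowest low
```

Frequent fragments such as "low" become single tokens, so the same text needs fewer tokens, and decoding the IDs recovers the original string exactly.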

Mistral AI has open-sourced the tokenizer. Trained on more than 100 languages, Tekken compresses both natural-language text and source code more efficiently than the SentencePiece tokenizer used in previous Mistral models.
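"Compression" here means how much text each token covers: a tokenizer that needs fewer tokens for the same text leaves more room in the context window and costs less compute per sentence. The sketch below measures this as UTF-8 bytes per token and counts how often one tokenizer beats another across per-language samples. Both tokenizers are hypothetical toys, and Mistral's exact evaluation methodology may differ.

```python
import re
from typing import Callable

Tokenizer = Callable[[str], list[str]]


def bytes_per_token(text: str, tokenize: Tokenizer) -> float:
    """Average UTF-8 bytes covered by each token; higher means better compression."""
    return len(text.encode("utf-8")) / len(tokenize(text))


def char_tokenize(text: str) -> list[str]:
    """Hypothetical baseline: one token per character."""
    return list(text)


def word_tokenize(text: str) -> list[str]:
    """Hypothetical alternative: one token per word, leading space attached (trailing space dropped)."""
    return re.findall(r"\s*\S+", text)


def fraction_better(samples: dict[str, str], tok_a: Tokenizer, tok_b: Tokenizer) -> float:
    """Fraction of samples (e.g. one per language) where tok_a compresses better than tok_b."""
    wins = sum(bytes_per_token(t, tok_a) > bytes_per_token(t, tok_b) for t in samples.values())
    return wins / len(samples)


SAMPLES = {"English": "hello world", "Korean": "안녕 세계"}

if __name__ == "__main__":
    for lang, text in SAMPLES.items():
        print(lang, bytes_per_token(text, char_tokenize), bytes_per_token(text, word_tokenize))
    print(fraction_better(SAMPLES, word_tokenize, char_tokenize))  # 1.0
```

A claim like "better for approximately 85% of languages" is the same kind of per-language tally, run with real tokenizers over much larger samples.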

Versatile Performance: The model excels at a range of tasks, including multi-turn conversation, math, common-sense reasoning, world knowledge, and coding.

Targeted at Desktop Computing: It sits between massive cloud models and compact mobile AI solutions.

Customization and Deployment: Developers can easily customize and deploy Mistral NeMo for enterprise applications, particularly useful for:

Chatbots

Multilingual tasks

Coding assistance

Text summarization

Function Calling: It boasts advanced agentic capabilities, including built-in function calling and JSON output generation, and was trained specifically for function calling, which broadens its usefulness across applications.

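Function calling lets a model connect to external tools: instead of answering in prose, the model emits a structured request (typically JSON) naming a function and its arguments, the application executes that function, and the result is passed back to the model.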
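The application side of that loop can be sketched in a few lines: parse the model's JSON request, run the matching function, and serialize the result for the model. This is a minimal sketch assuming a simple {"name": ..., "arguments": ...} shape, which is a common convention rather than Mistral NeMo's exact prompt format; `get_weather` and `dispatch` are hypothetical.

```python
import json
from typing import Any, Callable


def get_weather(city: str, unit: str = "celsius") -> dict[str, Any]:
    """Hypothetical tool; a real one would call a weather API instead of returning canned data."""
    return {"city": city, "temperature": 21, "unit": unit}


# Registry of tools the application is willing to run on the model's behalf.
TOOLS: dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch(model_output: str) -> str:
    """Parse a JSON tool call emitted by the model, run it, and return a JSON result."""
    call = json.loads(model_output)  # e.g. {"name": ..., "arguments": {...}}
    name, args = call["name"], call.get("arguments", {})
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    return json.dumps(TOOLS[name](**args))  # sent back to the model as the tool's reply


if __name__ == "__main__":
    # Pretend the model responded with this structured request instead of prose.
    raw = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
    print(dispatch(raw))
```

Keeping an explicit registry means the model can only trigger functions the application has deliberately exposed.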

Salesforce's xLAM

Salesforce has unveiled xLAM-1B, a 1-billion-parameter model that outperforms much larger competitors: it surpasses GPT-3.5-Turbo and Claude 3 Haiku on function-calling tasks.

Salesforce's xLAM-1B owes much of its success to APIGen, an automated pipeline for generating verifiable, diverse function-calling datasets.

APIGen represents a shift from the "more data, bigger models" paradigm to "better data, smarter models." This approach not only yields impressive performance but also aligns with growing demands for efficient, cost-effective AI solutions. By focusing on data quality and diversity, Salesforce has created a framework that could redefine AI development practices across the industry.
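The APIGen paper describes verifying generated samples in stages: a format check, an execution check that actually runs the calls, and a semantic check of whether the results answer the query. The sketch below mimics only the first two stages on a hypothetical one-function API library; all names are illustrative, not Salesforce's code.

```python
import json
from typing import Any, Callable


def add(a: float, b: float) -> float:
    """Hypothetical API that generated queries are allowed to call."""
    return a + b


API_LIBRARY: dict[str, Callable[..., Any]] = {"add": add}


def format_ok(sample: dict[str, str]) -> bool:
    """Stage 1: the answer must be valid JSON naming a known function with dict arguments."""
    try:
        call = json.loads(sample["answer"])
    except json.JSONDecodeError:
        return False
    return (
        isinstance(call, dict)
        and isinstance(call.get("name"), str)
        and call["name"] in API_LIBRARY
        and isinstance(call.get("arguments"), dict)
    )


def executes_ok(sample: dict[str, str]) -> bool:
    """Stage 2: actually run the call; discard samples whose call raises."""
    call = json.loads(sample["answer"])
    try:
        API_LIBRARY[call["name"]](**call["arguments"])
    except Exception:
        return False
    return True


def verify(samples: list[dict[str, str]]) -> list[dict[str, str]]:
    """Keep samples passing both stages; the cheap format check runs first."""
    return [s for s in samples if format_ok(s) and executes_ok(s)]


if __name__ == "__main__":
    candidates = [
        {"query": "What is 2 plus 3?", "answer": '{"name": "add", "arguments": {"a": 2, "b": 3}}'},
        {"query": "Add 1 and 1", "answer": "add(1, 1)"},  # not JSON
        {"query": "Sum 4 and 5", "answer": '{"name": "add", "arguments": {"x": 4}}'},  # bad args
    ]
    print(len(verify(candidates)), "of", len(candidates), "samples kept")
```

Ordering cheap checks before expensive ones is the key design choice: malformed samples are discarded before anything is executed.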

Apple's DCLM

Apple has made a significant entry into the open-source AI arena with the DataComp for Language Models (DCLM) project, setting a new standard for transparency and performance in language models.

DataComp is a collaborative effort, including Apple, the University of Washington, Tel Aviv University, and the Toyota Research Institute, aimed at designing high-quality datasets for training AI models. The original DataComp benchmark targeted multimodal (image-text) data; DCLM applies the same approach to language models. The concept is straightforward yet powerful:

Standardization: Use a standardized framework with fixed model architectures, training code, hyperparameters, and evaluations

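Effective data curation: With everything except the data held fixed, differences in results can be attributed to the dataset, enabling systematic comparison of data curation strategies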

The result: DCLM-Baseline, a dataset used to train the new DCLM models

Apple has released a family of open DCLM models:

DCLM-Baseline-7B:

7 billion parameters, trained on 2.5 trillion tokens

Context length of 2048 tokens

Outperforms Mistral-7B and approaches the performance of Llama 3 8B, Gemma, and Phi-3 on the MMLU benchmark

DCLM-1.4B, trained jointly with Toyota Research Institute:

1.4 billion parameters, trained on 2.6 trillion tokens

Significantly outperforms similar models like Hugging Face's SmolLM 1.7B, Alibaba's Qwen 2B, and Microsoft's Phi 1.5B on the MMLU benchmark
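Dividing training tokens by parameters shows how heavily these models were trained. As a rough reference point, the often-cited "Chinchilla" compute-optimal ratio is around 20 tokens per parameter; both DCLM models go far beyond that, a common choice when a small model should be cheap to run at inference time. The sketch below just does the arithmetic on the figures above.

```python
# (parameters, training tokens) for the DCLM models listed above.
DCLM_MODELS = {
    "DCLM-Baseline-7B": (7e9, 2.5e12),
    "DCLM-1.4B": (1.4e9, 2.6e12),
}

CHINCHILLA_RATIO = 20  # rough, often-cited compute-optimal tokens per parameter


def tokens_per_param(params: float, tokens: float) -> float:
    """How many training tokens the model saw per parameter."""
    return tokens / params


if __name__ == "__main__":
    for name, (params, tokens) in DCLM_MODELS.items():
        ratio = tokens_per_param(params, tokens)
        print(f"{name}: ~{ratio:,.0f} tokens/param (~{ratio / CHINCHILLA_RATIO:.0f}x the rule of thumb)")
```

The 7B model sees about 357 tokens per parameter and the 1.4B model about 1,857.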

It is interesting to see Apple embracing a truly open-source philosophy: open data, open-weight models, and open training code. This initiative could be laying the groundwork for deeper AI integration across Apple's product ecosystem.

By open-sourcing, Apple is positioning itself as a key player in the AI developer ecosystem and as a forward-thinking leader in the open-source AI space, with potential long-term implications for the entire tech industry.

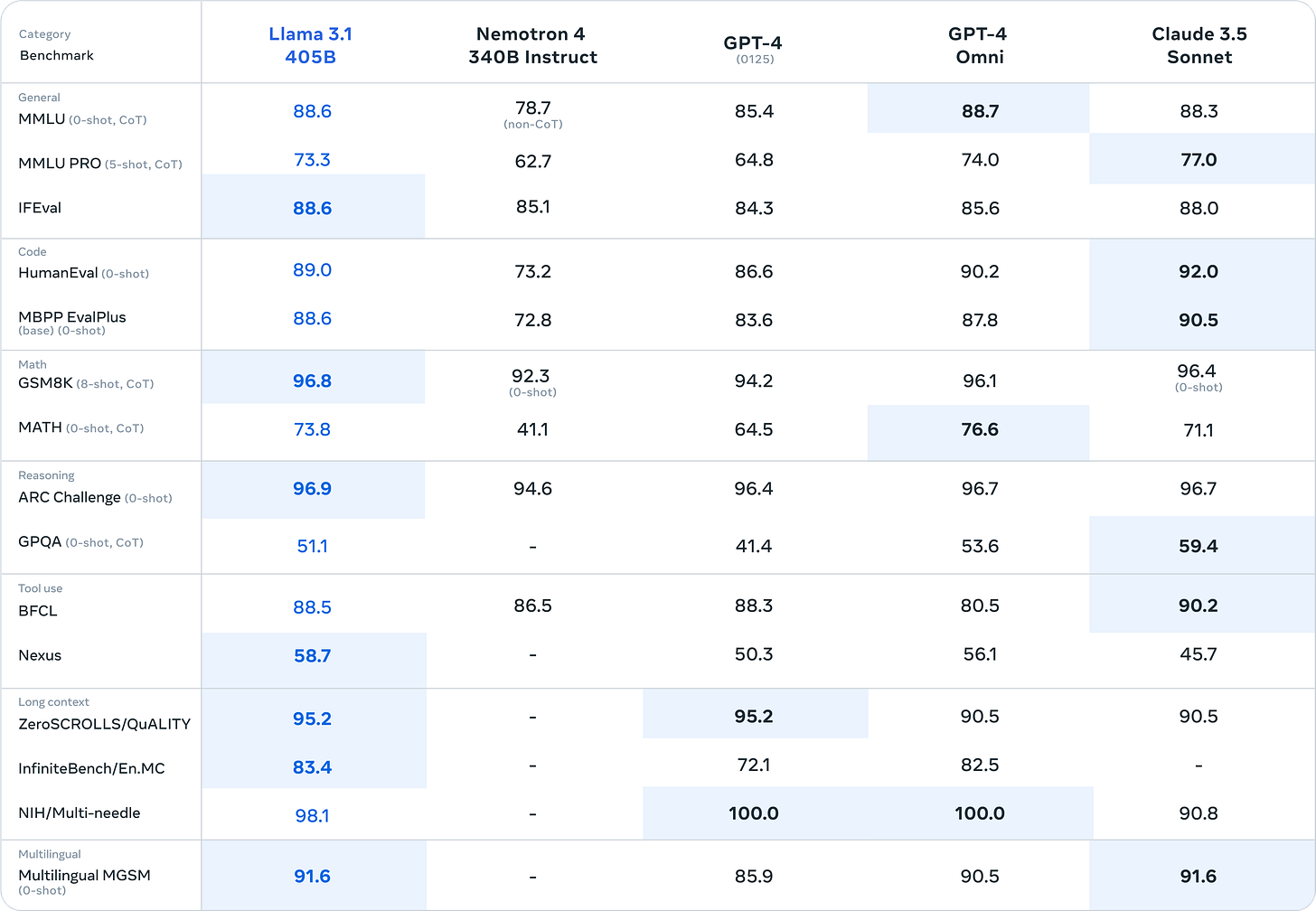

Meta's Llama 3.1

With the release of Llama 3.1, open-source LLMs have reached new heights, rivaling the performance of the best proprietary models. Llama 3.1 comes in three sizes (405B, 70B, and 8B parameters), each with a context length of 128,000 tokens, allowing for more comprehensive text analysis and complex reasoning tasks.

The 405B-parameter version of Llama 3.1 is the “first frontier-level open source AI model”, rivaling the capabilities of closed-source models such as GPT-4 and Claude 3.5 Sonnet. It is also a powerful tool for synthetic data generation, allowing users to create high-quality synthetic data to fine-tune smaller models and improve their accuracy across various domains.
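The synthetic-data workflow described above boils down to three steps: prompt a large "teacher" model, filter its answers, and save them as fine-tuning examples for a smaller model. A minimal sketch follows. `fake_teacher` is a hypothetical stand-in for a call to Llama 3.1 405B, the length filter is a deliberately crude placeholder, and the `messages` JSONL layout is a common convention rather than a format Meta prescribes.

```python
import json
from typing import Callable


def build_sft_records(
    prompts: list[str],
    teacher: Callable[[str], str],
    min_chars: int = 20,
) -> list[dict]:
    """Ask a large 'teacher' model for answers and keep those passing a simple filter."""
    records = []
    for prompt in prompts:
        answer = teacher(prompt).strip()
        if len(answer) < min_chars:  # crude quality gate; real pipelines filter far more carefully
            continue
        records.append({"messages": [
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": answer},
        ]})
    return records


def to_jsonl(records: list[dict]) -> str:
    """Serialize one JSON object per line, a common layout for fine-tuning datasets."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


if __name__ == "__main__":
    # Hypothetical stand-in for a call to a large model such as Llama 3.1 405B.
    def fake_teacher(prompt: str) -> str:
        canned = {"What is 12 * 12?": "12 * 12 = 144, because 12 * 10 = 120 and 12 * 2 = 24."}
        return canned.get(prompt, "Not sure.")

    data = build_sft_records(["What is 12 * 12?", "Name a prime."], fake_teacher)
    print(to_jsonl(data))  # only the first prompt survives the length filter
```

Passing the teacher in as a function keeps the pipeline independent of how the large model is actually hosted.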

Meta's rationale for open-sourcing Llama:

Ecosystem development:

Fosters a full ecosystem of tools, optimizations, and integrations

Prevents lock-in to a closed ecosystem

Competitive strategy:

Open-sourcing doesn't sacrifice a significant advantage in a competitive field

Aims to become industry standard through consistent competitiveness and openness

Business model alignment:

Meta's revenue doesn't rely on selling AI model access

Open-sourcing doesn't undercut sustainability or research investment

Proven open-source track record:

Success with projects like the Open Compute Project, PyTorch, and React

Long-term benefits from ecosystem innovations and standardization

Llama 3.1 comes with a comprehensive 92-page research paper, offering valuable insights for researchers and developers.

Seismic Shift in the AI Landscape

The recent deluge of open-source AI model releases, from tech giants to innovative startups, signals a seismic shift in the AI landscape. This is a strategic inflection point that could redefine how businesses approach AI development and deployment.

For businesses, the message is clear: the barriers to entry in AI are falling, while the potential for innovation is skyrocketing. Those who can adapt to this new paradigm, focusing on clever implementation rather than sheer scale, will be well-positioned to thrive in the AI-driven future that's unfolding before us.

The open-source AI revolution is here, and it's reshaping not just technology, but the very fabric of how we interact with and leverage artificial intelligence in our daily lives and businesses. The future of AI is not just big; it's smart, efficient, and accessible to all.

If you enjoyed this week’s story of The AI Notebook, please consider: 📥 Subscribing for free weekly insights and 🔗 sharing with friends and colleagues who might find it valuable. Your support helps keep this newsletter brewing! ☕📚 See you next week!

☕ This month, I've been enjoying Harley Estate Pichia coffee from Blue Tokai Coffee Roasters.